Human motion synthesis has advanced rapidly, yet realistic hand motion and bimanual interaction remain underexplored. Whole-body models often miss the fine-grained cues that drive dexterous behavior (finger articulation, contact timing, and inter-hand coordination), and existing resources lack high-fidelity bimanual sequences that capture nuanced finger dynamics and collaboration. To fill this gap, we collect a new dataset targeting these underrepresented aspects. For scalable annotation, we introduce a decoupled paradigm that first extracts representative motion features, e.g., contact events and finger flexion, and then leverages the reasoning of a large language model to produce fine-grained, semantically rich descriptions aligned with these features. Building on the resulting data and annotations, we benchmark diffusion and autoregressive models with versatile conditioning modes. Experiments demonstrate high-quality dexterous motion generation, supported by our newly proposed hand-focused metrics. We further observe clear scaling trends: larger models trained on larger, higher-quality datasets produce more semantically coherent bimanual motions. All data will be released to support future research.
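To make the feature-extraction stage of the decoupled annotation paradigm more concrete, here is a minimal sketch of how contact events and finger flexion could be computed from 3D joint positions and turned into text for a language model. It is an illustration under stated assumptions, not the pipeline used for the dataset: the function names, the 1 cm contact threshold, the 30 fps frame rate, and the three-joint finger layout are all hypothetical.

```python
import numpy as np


def contact_events(tips_a, tips_b, threshold=0.01):
    """Find frame intervals where two tracked points are in contact.

    tips_a, tips_b: arrays of shape (T, 3), assumed to be in meters.
    threshold: assumed contact distance (1 cm), not a value from the paper.
    Returns a list of (start_frame, end_frame) pairs, both inclusive.
    """
    dist = np.linalg.norm(tips_a - tips_b, axis=-1)
    in_contact = dist < threshold
    # Pad with False so contact on the first or last frame still has an edge.
    padded = np.concatenate(([False], in_contact, [False])).astype(int)
    edges = np.diff(padded)
    starts = np.flatnonzero(edges == 1)
    ends = np.flatnonzero(edges == -1) - 1
    return list(zip(starts.tolist(), ends.tolist()))


def flexion_angle(base, mid, tip):
    """Bend angle in degrees at the middle joint (0 = fully straight).

    base, mid, tip: arrays of shape (..., 3) for three consecutive joints.
    """
    u = mid - base
    v = tip - mid
    denom = np.linalg.norm(u, axis=-1) * np.linalg.norm(v, axis=-1)
    cos = np.sum(u * v, axis=-1) / np.maximum(denom, 1e-8)
    return np.degrees(np.arccos(np.clip(cos, -1.0, 1.0)))


def summarize(events, angles, fps=30.0):
    """Turn extracted features into a short text snippet for an LLM prompt."""
    parts = [f"contact from {s / fps:.2f}s to {e / fps:.2f}s" for s, e in events]
    parts.append(f"peak flexion {float(np.max(angles)):.0f} deg")
    return "; ".join(parts)
```

A summary string of this kind would then be passed to the language model, which writes the final description. Keeping the numeric extraction separate from the language step is what makes the paradigm decoupled: the thresholds can be tuned without changing the prompt.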

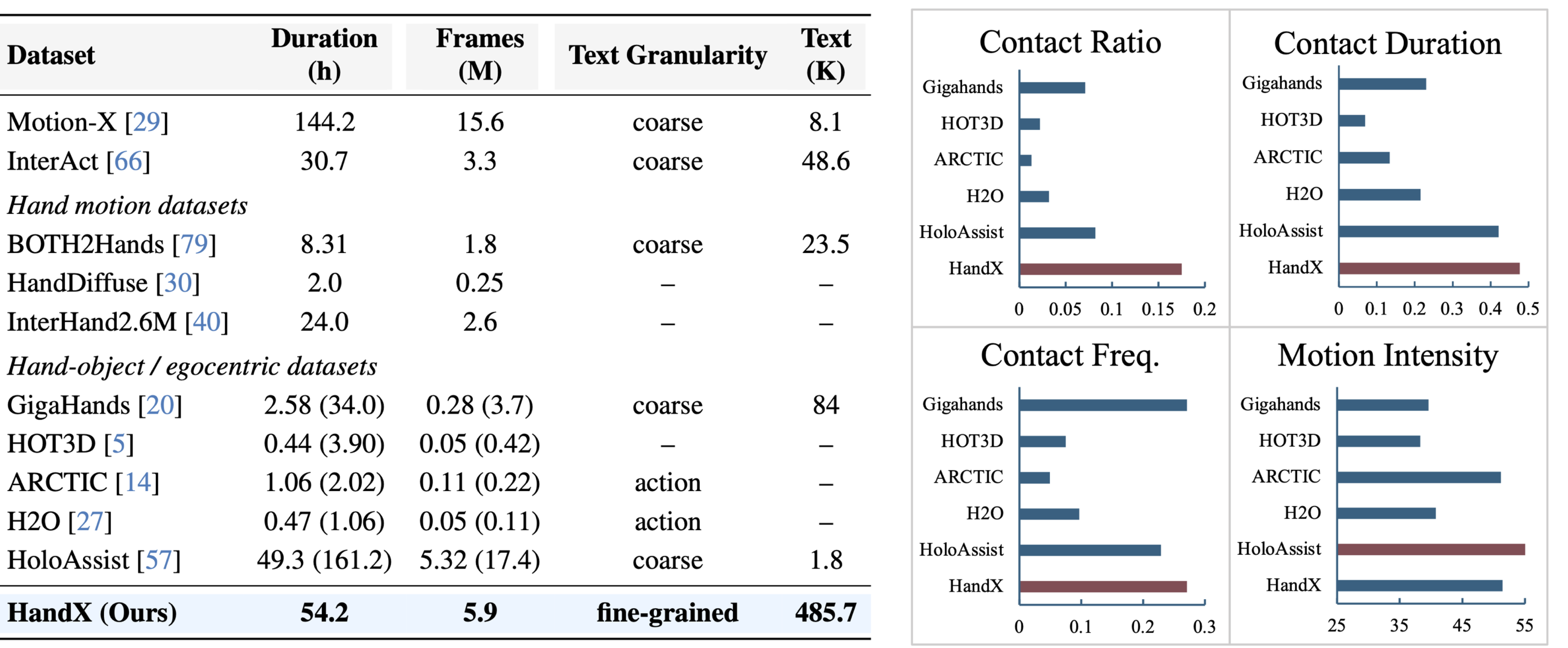

Left: Dataset scale and text supervision. HandX provides fine-grained, multi-level language descriptions. Right: Statistics of bimanual motion quality. HandX provides contact-rich bimanual motions.

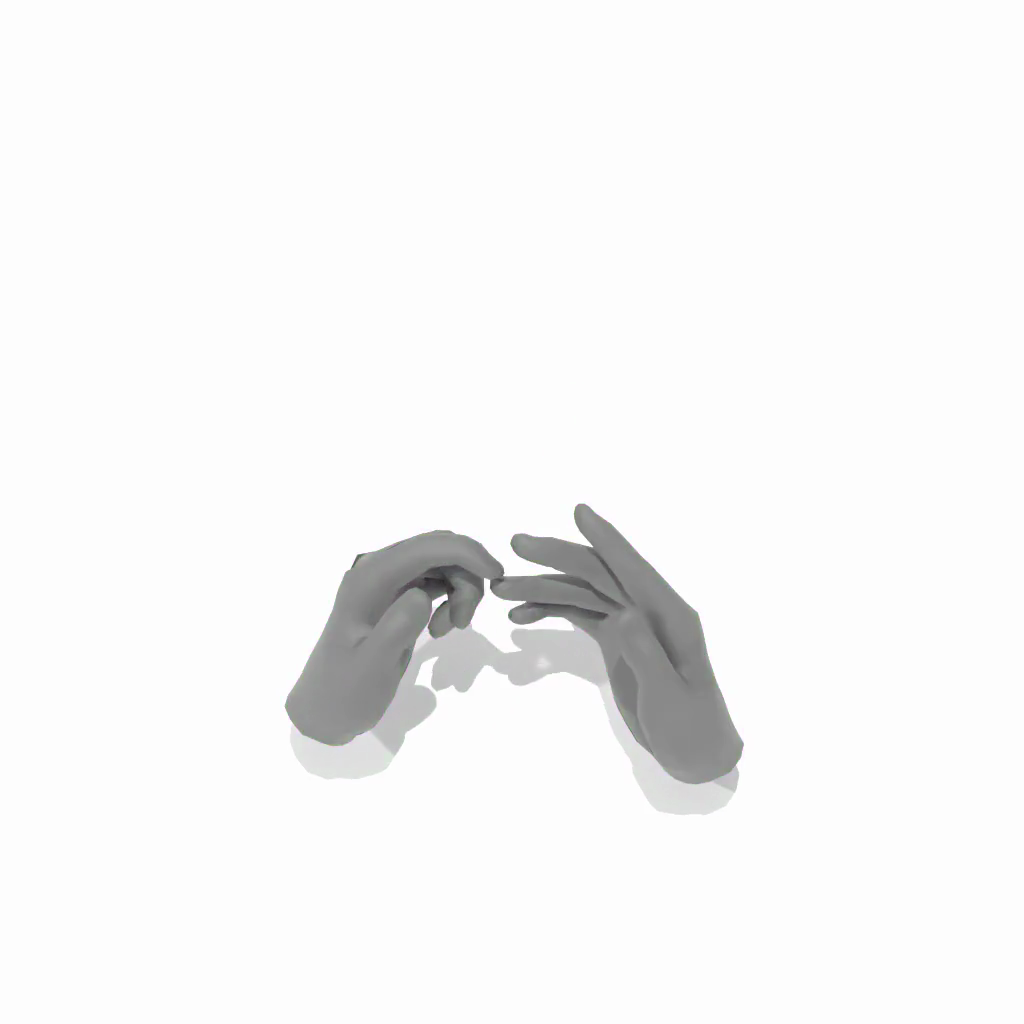

Left: The ring and pinky fingers perform a slow bending motion while thumb and middle stay extended.

Right: The hand transitions from closed to open as fingers straighten out.

Relation: Right fingertips contact the left palm, then break apart rapidly at the end.

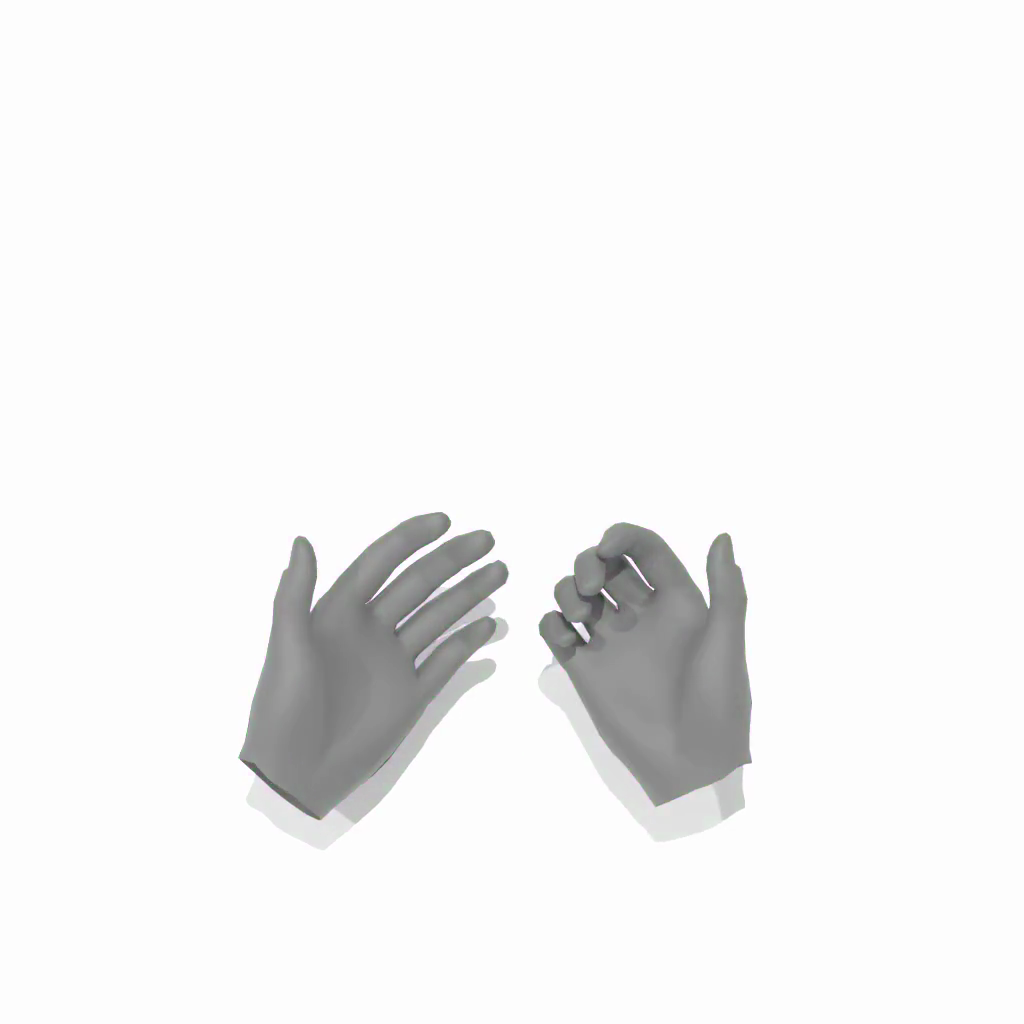

Left: All fingers held straight; index, middle, and ring fingers spread apart from a closed position.

Right: Flat and open hand; thumb performs a single bending motion mid-sequence.

Relation: Palms stay near and aligned with repeated tapping contacts between corresponding fingertips.

Left: Index finger transitions from partially bent to fully extended; wrist moves down and side-to-side.

Right: Index and middle fingers bend at the base while other fingers remain extended.

Relation: Left index tip contacts right palm, then right index tip contacts left palm as hands swap vertical positions.

Left: The hand performs a clench-and-release motion, bending rapidly then straightening.

Right: Fingers synchronously bend into a fist and extend again, mirroring the left hand.

Relation: Hands approach quickly, palms and fingertips make contact, then separate.

Left: Thumb extended, other fingers bent; thumb contacts all four fingertips.

Right: Fingers bend and straighten; thumb contacts ring, index, and middle tips.

Relation: Hands stay far apart; right hand positioned to the right and above.

Left: Hand transitions from open to closed; middle finger and thumb touch at the end.

Right: Fingers uncurl from bent to extended; thumb contacts index and middle tips.

Relation: Two thumb contacts at the beginning, then hands rapidly separate.

Left: Static pose with thumb extended and fingers bent; index-middle spacing closes.

Right: Thumb highly active, contacting index tip 3 times and middle tip 4 times.

Relation: Hands far apart and do not interact.

Left: Fingers bend and straighten; pinky hyperextends, ring finger contacts thumb.

Right: Fingers highly active; pinky hyperextends, thumb contacts middle and index tips.

Relation: Hands start at medium distance and move far apart.

The green section represents the first prompt's results, and the orange section represents the second prompt's results. The model ensures smooth transitions between segments.

@article{zhang2026handx,
  title = {HandX: Scaling Bimanual Motion and Interaction Generation},
  author = {Zhang, Zimu and Zhang, Yucheng and Xu, Xiyan and Wang, Ziyin and Xu, Sirui and Zhou, Kai and Zhou, Bing and Guo, Chuan and Wang, Jian and Wang, Yu-Xiong and Gui, Liang-Yan},
  journal = {arXiv},
  year = {2026},
}